|

I was an applied scientist at Amazon in Madrid, where I developed computer vision and machine learning techniques to solve image-based issues in Amazon's catalog. Before that, I did my PhD at Universidad de Zaragoza, where I was advised by Diego Gutierrez and Belen Masia. During my PhD, I worked on problems at the interface between computer vision, computer graphics, and human perception.

|

|

|

I am interested in topics at the interface between computer vision and computer graphics. These include, but are not limited to, how we can inversely model the world, i.e., acquire material properties, light, or geometry from simple input sources (images), and how we can develop faster or more intuitive methods to manipulate digital assets or foster artistic processes.

|

|

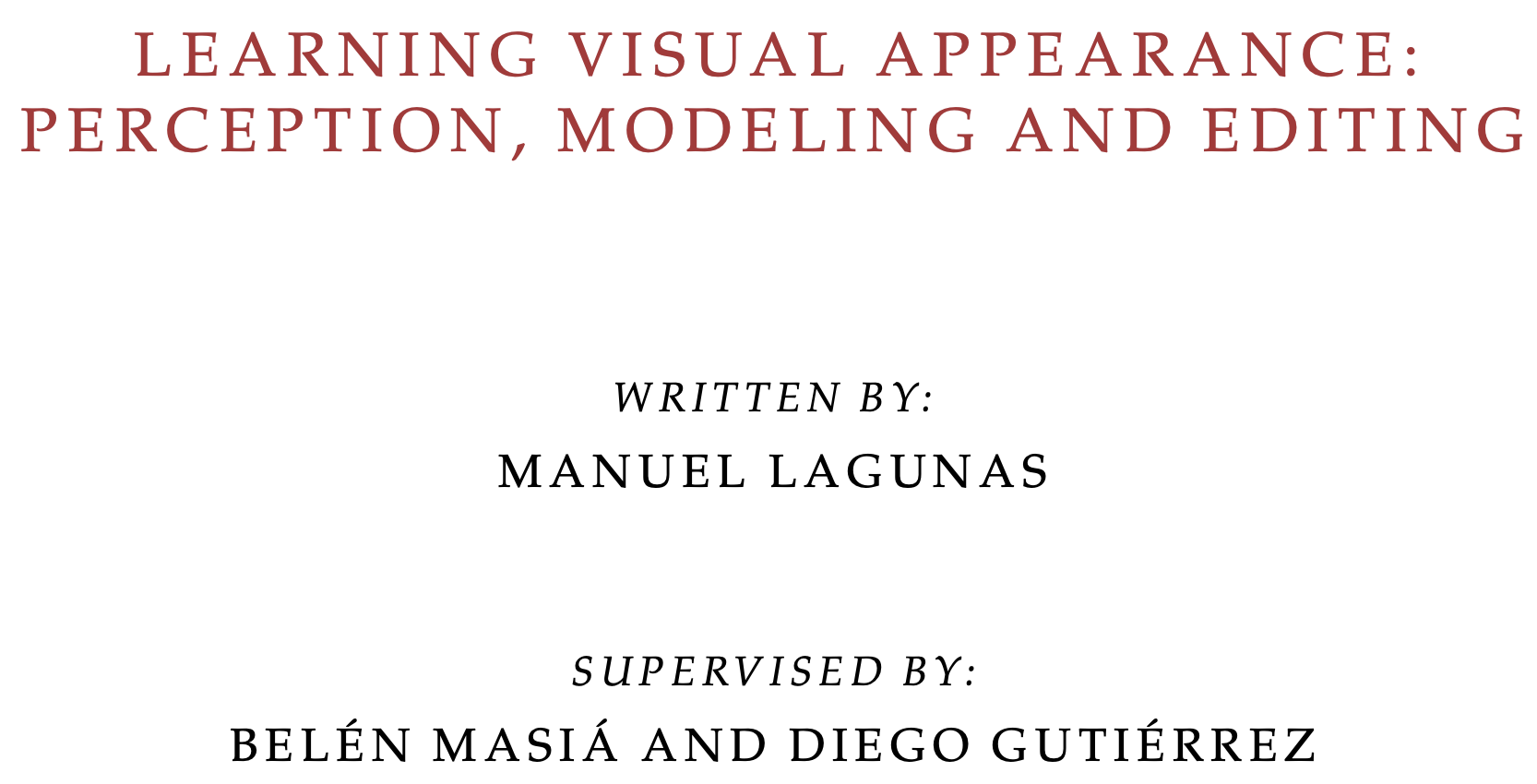

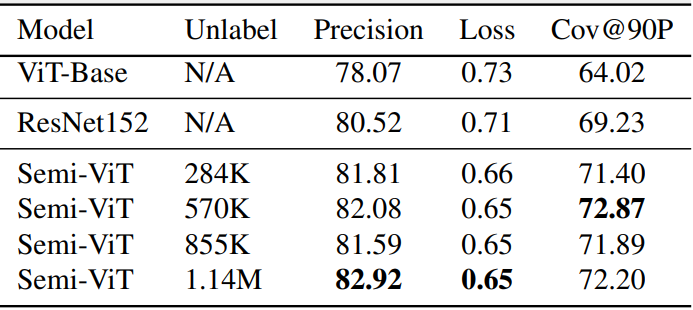

Brayan Impata*, Manuel Lagunas*, Victor Martinez, Virginia Fernandez, Christos Georgakis, Sofia Braun, Felipe Bertrand CVPR 2024 Workshops: Fine-Grained Visual Categorization (FGVC), 2024 Project page · Paper · Bib Semi-ViT enhances e-commerce product attribute extraction by significantly improving precision and coverage compared to fully supervised models, even with 25% less labeled data. |

|

Manuel Lagunas*, Brayan Impata*, Victor Martinez Gomez, Virginia Fernandez, Christos Georgakis, Sofia Braun, Felipe Bertrand CVPR Workshops: LXAI, 2023 Project page · arXiv · Bib We explore Semi-ViT for fine-grained classification with scarce annotated data. Semi-ViT outperforms traditional models and ViTs, making it effective for e-commerce applications. |

|

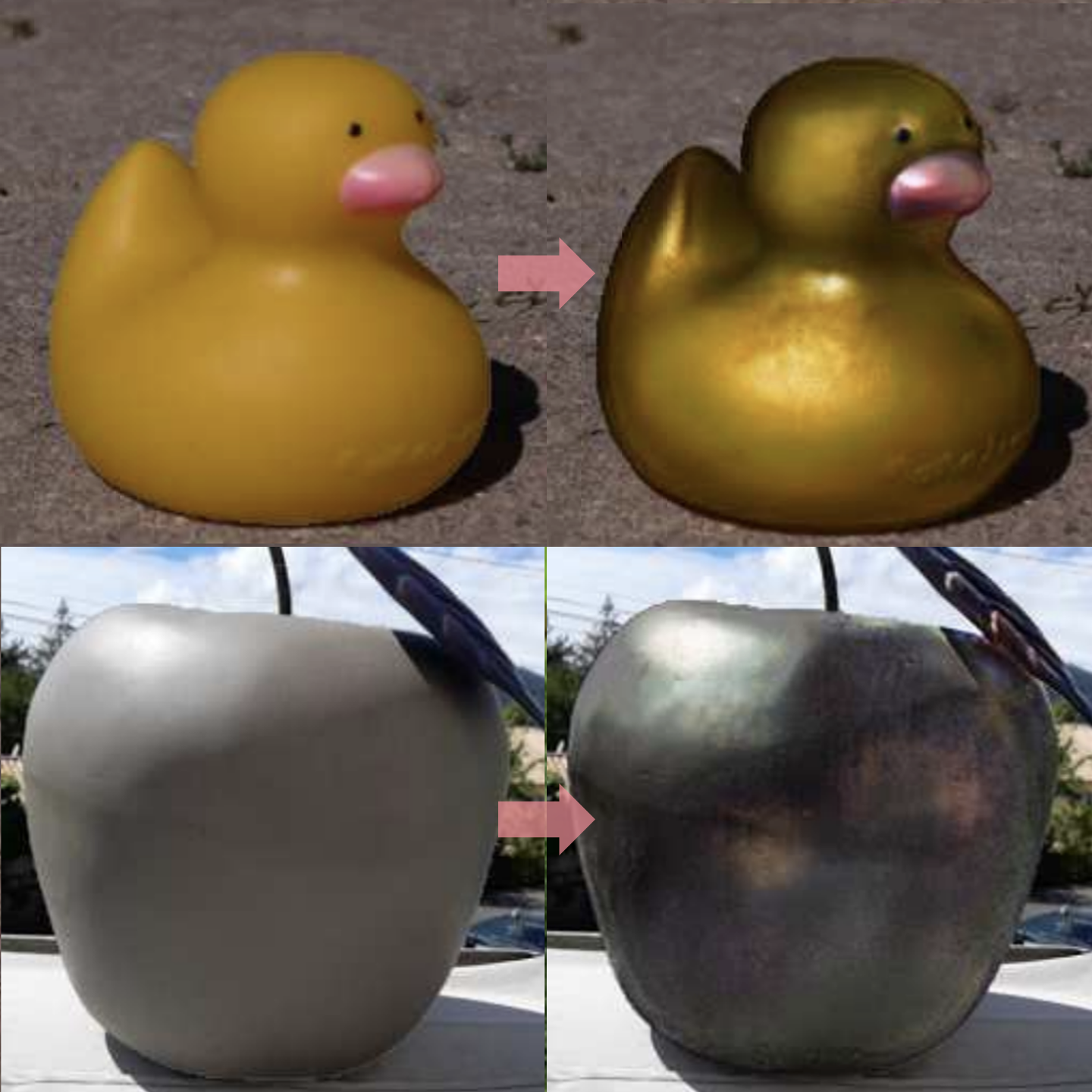

J. Daniel Subias, Manuel Lagunas Computer Graphics Forum (CGF, proc. Eurographics), 2023 arXiv · Code · Models and data · Bib We perform in-the-wild intuitive material editing using perceptual attributes. We recover high-frequency details from the input while keeping the intuitive editing capabilities of the model. |

|

Johanna Delanoy, Manuel Lagunas, Jorge Condor, Diego Gutierrez, Belen Masia Computer Graphics Forum (CGF), 2022 Project page · arXiv · Code · Bib We rely on an estimation of the geometry from the input image and an editing network that uses high-level perceptual attributes to perform intuitive material editing. |

|

Manuel Lagunas, Xin Sun, Jimei Yang, Ruben Villegas, Jianming Zhang, Zhixin Shu, Belen Masia, Diego Gutierrez Eurographics Symposium on Rendering (Proc. EGSR), 2021 arXiv · Code · Bib We train a generative model to perform in-the-wild human relighting, lifting the assumption that materials are Lambertian. |

|

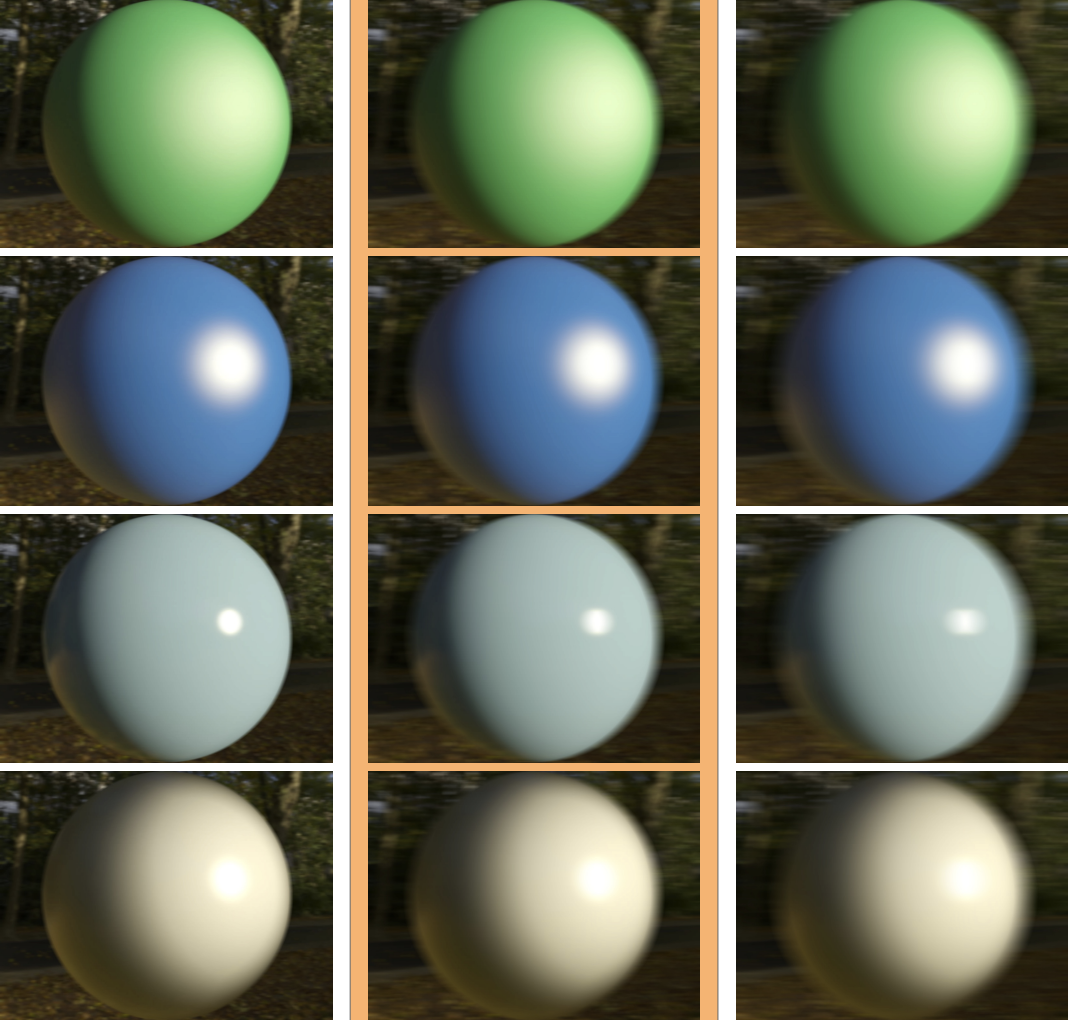

Manuel Lagunas, Ana Serrano, Diego Gutierrez, Belen Masia Journal of Vision (JoV), 2021 Project page · arXiv · Bib A comprehensive study of how geometry, illumination, and their frequencies influence our performance at recognizing materials from images. |

|

Johanna Delanoy, Manuel Lagunas, Ignacio Galve, Diego Gutierrez, Ana Serrano, Roland Fleming, Belen Masia ACM Transactions on Graphics Posters, 2020 Abstract · Poster An analysis of subjective and objective measures in the development of computational methods for material similarity. |

|

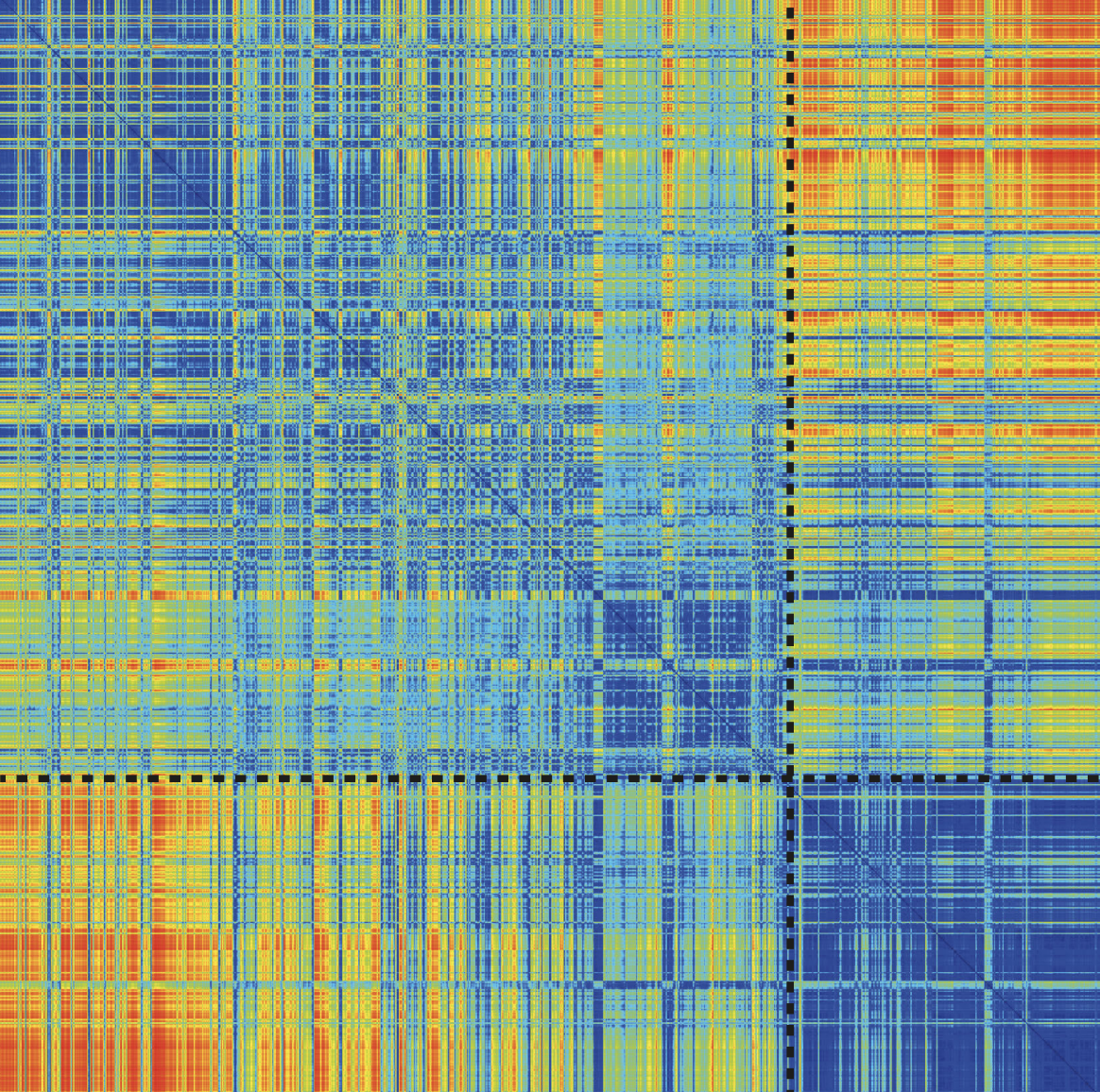

Manuel Lagunas, Sandra Malpica, Ana Serrano, Elena Garces, Diego Gutierrez, Belen Masia ACM Transactions on Graphics (TOG, Proc. SIGGRAPH), 2019 Project page · arXiv · Code · Bib We introduce a neural-based similarity metric that learns from perceptual data. It outperforms the state of the art, is aligned with human perception, and can be used for several applications. |

|

Ruiquan Mao, Manuel Lagunas, Belen Masia, Diego Gutierrez ACM Symposium on Applied Perception (SAP), 2019 Pdf · Bib A comprehensive study of the effect of motion on our perception of high-level perceptual attributes that describe material appearance. |

|

|

Manuel Lagunas, Elena Garces, Diego Gutierrez Multimedia Tools and Applications (MTAP), 2018 arXiv · Bib We introduce a similarity model capable of retrieving icons based on their style and visual identity. We rely on a Siamese model paired with a triplet loss function that learns from crowd-sourced data. |

|

Manuel Lagunas, Elena Garces Conferencia Española de Informatica Grafica (Proc. CEIG), 2017 arXiv · Code · Bib We develop a transfer learning method that fine-tunes the initial layers of a convolutional neural network. This allows it to learn low-level features (strokes), which are important in illustrations. |

|

|

|

Feel free to steal this website's source code. Do not scrape the HTML from this page itself, as it includes analytics tags that you do not want on your own website; use the GitHub code instead. Also, consider using Leonid Keselman's Jekyll fork of this page. |